More than alerts.

The postmortem writes itself.

Alerts from across your stack flow into one place. Claude writes the postmortem from every signal it saw.

Free forever for 1 project · No credit card required

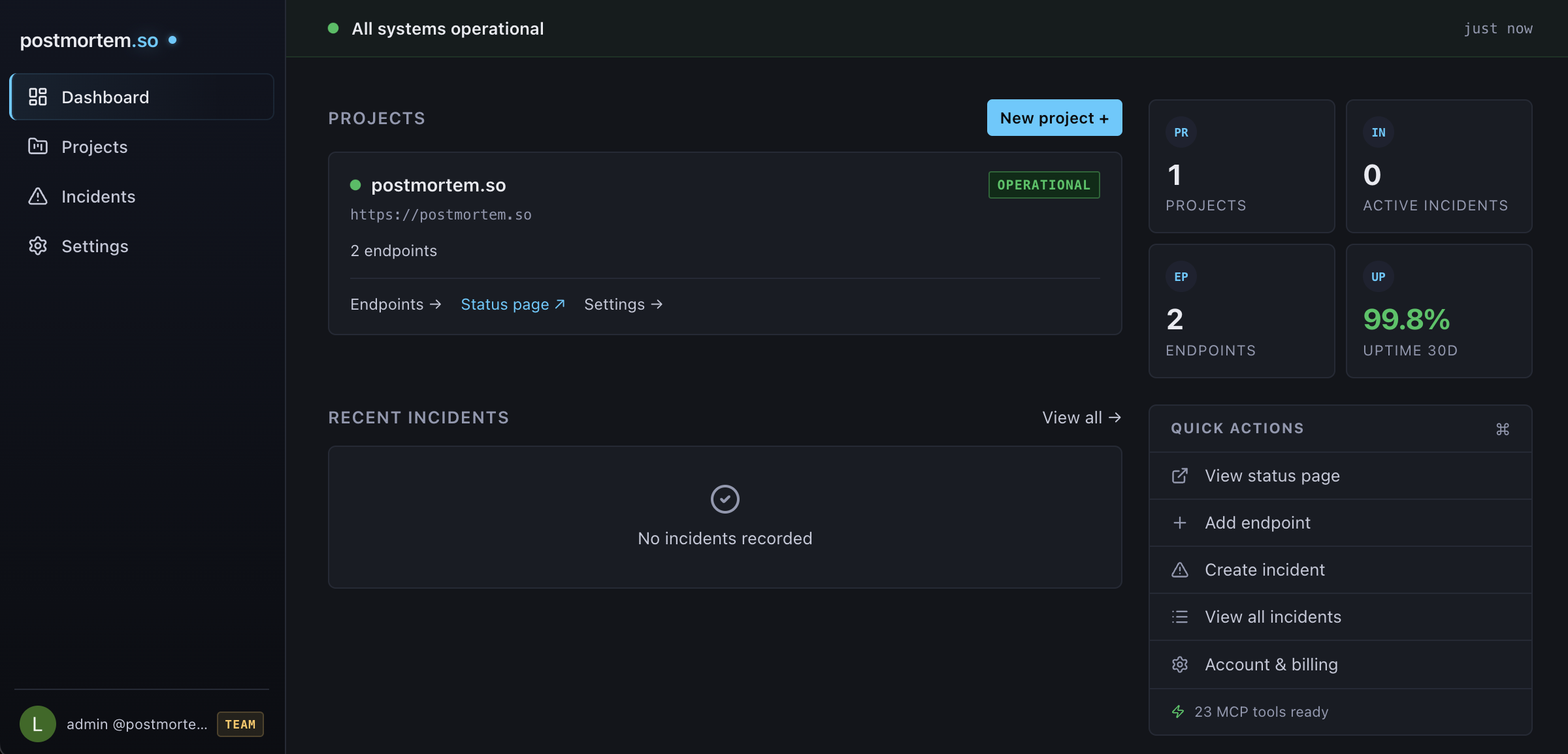

Connect your monitoring tools, manage incidents, generate AI postmortems — all from one dashboard.

Works with your stack

Every tool in your stack.

One place for the story.

Route alerts from the tools you already use. postmortem.so creates incidents and generates postmortems from the full context — error details, metric values, stack traces.

Sentry

Error alerts, stack traces

Grafana

Metric alerts, threshold breaches

Better Stack

Uptime alerts, incident webhooks

Generic Webhook

Any tool via JSON webhook

PagerDuty

On the roadmap

Or use our built-in endpoint monitoring: HTTP checks as often as every minute, no setup required.

See it in action

This postmortem wrote itself.

The AI read the check history and timeline updates — then wrote this in 3.8 seconds.

Summary

A DNS resolver failure at Vercel's edge layer caused intermittent latency spikes on postmortem.so. Requests that missed the DNS cache fell through to a secondary nameserver with a 10-second timeout, producing response times of 8–12 seconds against a baseline under 200ms. Resolved after Vercel flushed the DNS cache on affected eu-central-1 edge nodes. No HTTP errors — all requests returned 200.

Timeline

Root Cause

The DNS TTL for postmortem.so expired on Vercel's eu-central-1 edge nodes, so lookups missed the cache and went to a failing resolver, which fell back to a secondary nameserver with a 10-second timeout. Requests that hit cached DNS resolved normally, so the degradation was intermittent, not a total outage.

Impact

- ~6 minutes of degraded performance

- Zero HTTP errors (all requests returned 200)

- ~30% of requests at 8–12 second response times

- Scope limited to eu-central-1 edge nodes

Action Items

| Priority | Action | Owner |
|---|---|---|
| High | Extend DNS TTL to reduce cache miss frequency | Infrastructure |
| High | Add DNS resolution latency as a monitoring signal | Observability |
| Medium | Configure fallback nameservers with shorter timeout | Infrastructure |
| Low | Set up multi-region synthetic checks | Observability |
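Why was the example's degradation intermittent rather than total? A toy model makes the mechanism concrete: a TTL cache answers most lookups, and only a cache miss pays the fallback timeout. This is an illustrative simulation, not Vercel's resolver. The class name, the 60-second TTL, and the 0.2-second baseline are assumptions; only the 10-second timeout comes from the example.

```python
from dataclasses import dataclass, field

FALLBACK_TIMEOUT_S = 10.0  # from the example: secondary nameserver timeout
BASELINE_S = 0.2           # assumed: normal response time with a warm cache


@dataclass
class EdgeDnsCache:
    """Toy TTL cache in front of a failing primary resolver (illustrative only)."""
    ttl_s: float
    expires_at: dict[str, float] = field(default_factory=dict)

    def response_time(self, host: str, now: float) -> float:
        """Simulated response time, in seconds, for a request made at time `now`."""
        if self.expires_at.get(host, float("-inf")) > now:
            return BASELINE_S  # cache hit: DNS adds no delay
        # Cache miss: wait out the fallback timeout, then re-cache the answer.
        self.expires_at[host] = now + self.ttl_s
        return BASELINE_S + FALLBACK_TIMEOUT_S


cache = EdgeDnsCache(ttl_s=60)  # assumed TTL
times = [cache.response_time("postmortem.so", t) for t in (0, 10, 30, 61, 70)]
print(times)
```

Requests at t=0 and t=61 land on a missing or expired entry and take just over 10 seconds; the others are served from cache at the baseline. The sketch ignores concurrent requests and multiple edge nodes, so it cannot reproduce the ~30% slow-request share from the example.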

Always on

Every day, not just bad days.

You don't have incidents every day. But you do have a public status page every day. You do have webhooks flowing from your monitoring stack. You do have a team that might need to spin up an incident at 3am. postmortem.so is there for all of it — and when the incident ends, the postmortem writes itself.

How it works

From outage to postmortem in under 5 minutes.

Connect

Your tools or ours

Point your existing monitoring at postmortem.so via webhook: Sentry, Grafana, or any tool that sends alerts. Or use our built-in endpoint monitoring, with checks as often as every minute. Either way, incidents flow into one place.
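For the generic webhook, any tool that can POST JSON will do. Below is a minimal sketch using only Python's standard library. It assumes a hypothetical URL and payload shape: `WEBHOOK_URL`, `title`, `severity`, and `details` are placeholders, not a documented postmortem.so schema, so take the real values from your project's integration settings.

```python
import json
import urllib.request

# Hypothetical placeholder: copy the real ingestion URL from your project settings.
WEBHOOK_URL = "https://postmortem.example/hooks/YOUR-PROJECT-TOKEN"


def build_alert_request(title: str, severity: str, details: dict) -> urllib.request.Request:
    """Build (but do not send) a JSON POST for a generic webhook integration.

    The payload field names are assumptions for illustration only.
    """
    payload = {
        "title": title,        # hypothetical field name
        "severity": severity,  # hypothetical field name
        "details": details,    # free-form context a postmortem could draw on
    }
    return urllib.request.Request(
        WEBHOOK_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


req = build_alert_request(
    "Checkout latency above 2s",
    "critical",
    {"p95_ms": 2340, "service": "checkout"},
)
# urllib.request.urlopen(req, timeout=10) would actually send it.
```

Building the request separately from sending it keeps the payload easy to inspect or log before anything leaves the machine.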

Detect

Incidents create themselves

When an alert fires (a Sentry error spike, a Grafana threshold breach, or 3 consecutive endpoint failures), an incident opens automatically. Your team is alerted via email and Slack, and your status page updates in real time.
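The "3 consecutive endpoint failures" rule amounts to a simple counter. Here is a sketch of that logic, not the product's code. It assumes that any successful check resets the count and that an incident stays open until someone resolves it; `EndpointMonitor` and `record_check` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Optional

FAILURE_THRESHOLD = 3  # from the copy: 3 consecutive failures open an incident


@dataclass
class EndpointMonitor:
    """Counts consecutive failed checks for one endpoint (hypothetical sketch)."""
    threshold: int = FAILURE_THRESHOLD
    consecutive_failures: int = 0
    incident_open: bool = False

    def record_check(self, ok: bool) -> Optional[str]:
        """Record one check result; return "incident_opened" when the threshold is crossed."""
        if ok:
            self.consecutive_failures = 0  # any success breaks the streak
            return None
        self.consecutive_failures += 1
        if not self.incident_open and self.consecutive_failures >= self.threshold:
            self.incident_open = True  # stays open until someone resolves it
            return "incident_opened"
        return None


monitor = EndpointMonitor()
checks = [True, False, False, True, False, False, False, False]
events = [monitor.record_check(ok) for ok in checks]
print(events.index("incident_opened"))  # 6
```

Because a success resets the counter, an endpoint that fails twice and then recovers never opens an incident. Only the third failure in a row does, and later failures do not open duplicates.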

Generate

AI postmortem in under 5 seconds

Resolve the incident. The AI reads every signal — check history, webhook payloads, error details, timeline updates — and writes a structured postmortem. Summary, timeline, root cause, impact, action items. One click pushes action items to Linear or GitHub.
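The five sections map naturally onto a small data structure. The following sketch uses a hypothetical `Postmortem` type (the product's internal format is not described here) and renders it to markdown with the same columns as the example's action-item table.

```python
from dataclasses import dataclass, field


@dataclass
class ActionItem:
    priority: str  # "High", "Medium", or "Low", as in the example table
    action: str
    owner: str


@dataclass
class Postmortem:
    """Hypothetical container for the five postmortem sections."""
    summary: str
    timeline: list[str]
    root_cause: str
    impact: list[str]
    action_items: list[ActionItem] = field(default_factory=list)

    def to_markdown(self) -> str:
        """Render the sections in order, with action items as a pipe table."""
        lines = ["## Summary", self.summary, "", "## Timeline"]
        lines += [f"- {entry}" for entry in self.timeline]
        lines += ["", "## Root Cause", self.root_cause, "", "## Impact"]
        lines += [f"- {item}" for item in self.impact]
        lines += ["", "## Action Items", "| Priority | Action | Owner |", "|---|---|---|"]
        lines += [f"| {a.priority} | {a.action} | {a.owner} |" for a in self.action_items]
        return "\n".join(lines)


pm = Postmortem(
    summary="Intermittent latency spikes caused by a DNS resolver failure.",
    timeline=[],  # the example's timeline entries are not reproduced here
    root_cause="Cache misses fell through to a secondary nameserver with a 10-second timeout.",
    impact=["~6 minutes of degraded performance", "Zero HTTP errors"],
    action_items=[
        ActionItem("High", "Extend DNS TTL to reduce cache miss frequency", "Infrastructure"),
        ActionItem("Low", "Set up multi-region synthetic checks", "Observability"),
    ],
)
md = pm.to_markdown()
print(md)
```

Keeping action items as structured records rather than free text is what makes a one-click push to an issue tracker straightforward.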

MCP integration

Manage incidents from your editor.

postmortem.so speaks MCP. Create incidents, resolve them, and generate postmortems from Claude, Cursor, or any MCP client without switching tabs. It exposes 25 tools, including one that pushes action items straight to Linear.
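Under the hood, MCP runs on JSON-RPC 2.0: a client discovers a server's tools with `tools/list` and invokes one with `tools/call`, passing a tool `name` and its `arguments`. The sketch below only builds those two messages to show their shape. The tool name `create_incident` and its arguments are hypothetical; a real client should use the names and input schemas the server returns.

```python
import json
from itertools import count
from typing import Optional

_request_ids = count(1)  # JSON-RPC ids should be unique within a session


def jsonrpc_request(method: str, params: Optional[dict] = None) -> str:
    """Serialize a JSON-RPC 2.0 request of the kind MCP clients send."""
    message = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


# 1. Ask the server which tools it exposes.
list_msg = jsonrpc_request("tools/list")

# 2. Call one. "create_incident" and its arguments are hypothetical.
call_msg = jsonrpc_request(
    "tools/call",
    {"name": "create_incident", "arguments": {"title": "API latency spike"}},
)
print(list_msg)
print(call_msg)
```

In practice Claude or Cursor generate and send these messages over the server's transport for you.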

Pricing

Simple, transparent pricing.

Start free. Upgrade when you need AI postmortems or more projects.

Free

For personal projects and side hustles.

The free tier is limited to the first 200 accounts so we can keep infrastructure costs sustainable.

- 1 project

- 5 endpoints

- Public status page

- Incident timeline

- Slack webhook alerts

- 5-minute check interval

Pro

Billed €190/year — 2 months free

For teams that ship fast and recover faster.

- 10 projects, 50 endpoints

- AI-generated postmortems

- Webhook ingestion (Sentry, Grafana, generic)

- MCP integration (25 tools)

- Email + Slack alerts (500 subscribers)

- Custom domain

- 1-minute check interval

- Priority support

Team

Billed €490/year — 2 months free

For engineering organisations with compliance requirements.

- Everything in Pro

- 200 endpoints

- Up to 10 team members

- 2,000 email subscribers

- 500 webhooks/hour

- White-label branding

- SLA monitoring

- Audit logs

- SSO (coming soon)

All plans include a public status page, incident timeline, Slack alerts, and read-only API access. Pro and Team add webhook ingestion from Sentry, Grafana, and any other monitoring tool that can send a JSON webhook.